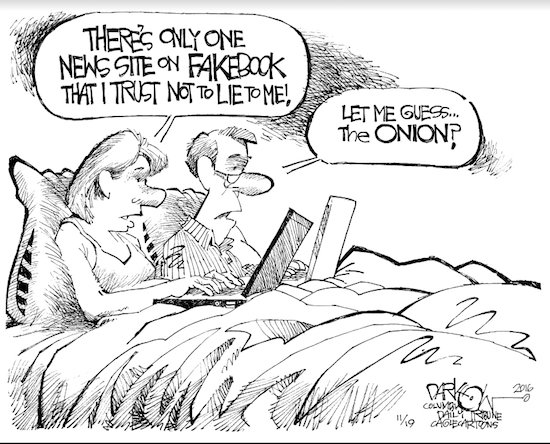

OPINION: How curated articles could help Facebook fight fake news

In an attempt to curtail fake news on its network, Facebook may be weighing a tool that would push stories from established, reputable sources to its users, according to sources close to the project.

As fake news stories have spread across the internet, some have placed the blame on social media sites like Facebook, where users have nearly unfettered freedom to share unverified information with large audiences. Facebook's founder and chief executive, Mark Zuckerberg, has previously argued that Facebook is a technology company rather than a media company. He has also shifted responsibility for the consequences of fake news posts onto others, even though many of those posts favored President-elect Donald Trump over his challenger, Hillary Clinton, in the 2016 presidential election.

But now the company appears to be charting a new direction, one that other platforms may follow, aimed at cutting back on fake news and providing its users with reliable, valuable content.
